Lead Scoring (HubSpot): Shared Frontend Architecture and Product Ownership

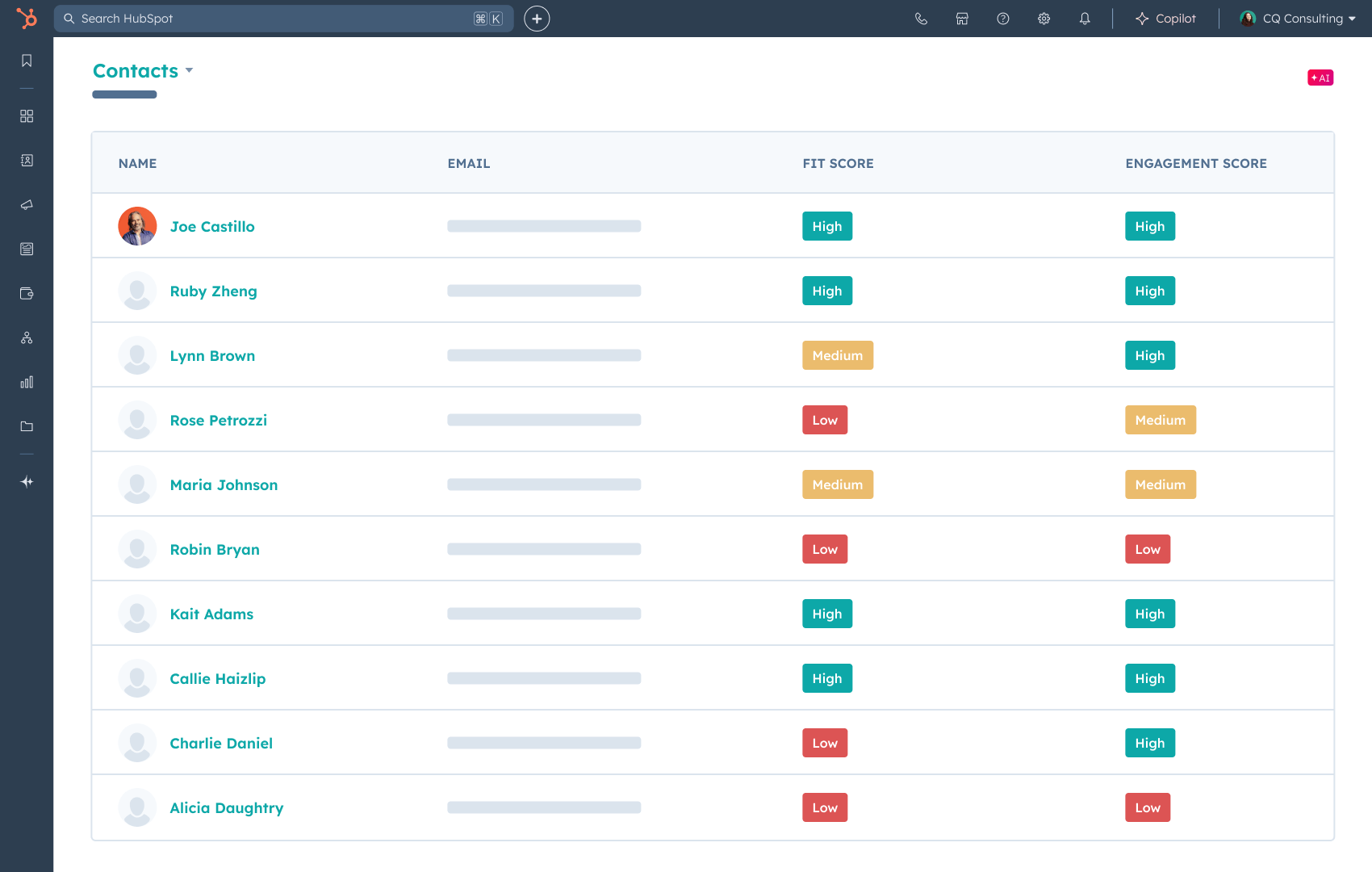

Productizing lead scoring across HubSpot’s platform through shared frontend architecture, direct ownership of the Marketing Lead Scoring application, and delivery of reliability-critical features used daily at scale.

Context

Lead Scoring is a core HubSpot capability spanning Marketing, Sales, and Service. Scores directly drive automation, routing, and prioritization workflows, making correctness and reliability essential.

The frontend is not just configuration UI. It is the primary interface where non-technical users encode business logic that affects downstream systems at scale.

In addition to shared platform architecture, the team owns the Marketing Lead Scoring application, a high-usage customer-facing product tightly coupled to backend scoring systems.

Problem / Constraints

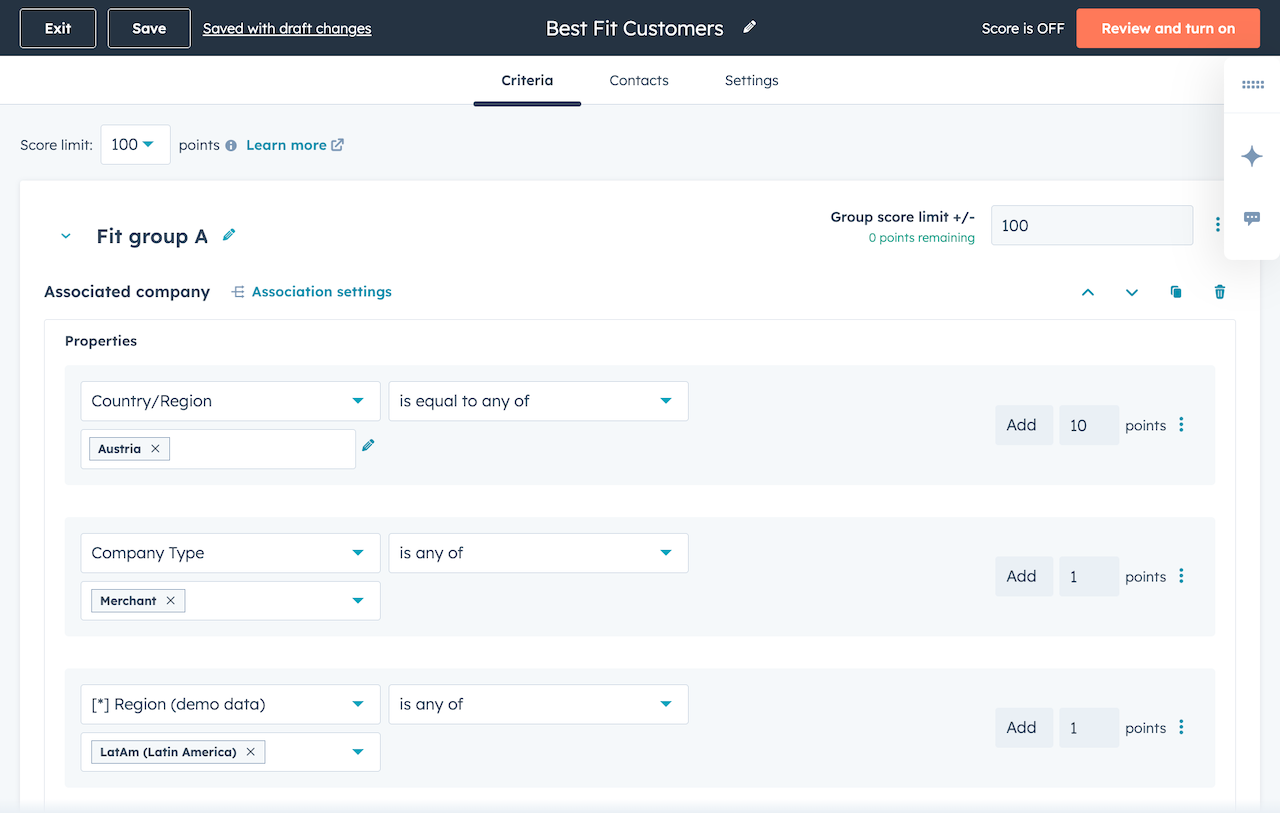

Scoring needed to remain flexible and extensible without fragmenting across product surfaces, while the Marketing Lead Scoring app required frequent iteration without breaking existing customer workflows.

A major migration blocker was support for advanced conditional scoring (AND logic), representing ~10–15% of scoring requests and tied directly to customer retention and revenue risk.

The UI had to encode advanced concepts like frequency windows, validation rules, and historical scoring behavior in a way non-technical users could reason about. Frontend correctness failures could cause real customer harm through misrouted leads or broken automation.

Legacy constraints and cross-team dependencies also limited how aggressively the system could change, making safe evolution and integration discipline core engineering concerns.

Ownership & Scope

As a senior engineer on the Lead Scoring frontend framework team, I owned both shared platform abstractions and direct product delivery for the Marketing Lead Scoring app.

My scope included frontend architecture and shared primitives used across scoring surfaces; end-to-end delivery of high-impact Marketing Lead Scoring features; cross-surface consistency and integration safeguards; and reliability, testing, and performance infrastructure for scoring workflows.

I coordinated closely with Backend, Product, and Design on delivery-critical initiatives, surfaced tradeoffs early, and adjusted scope to preserve migration timelines.

This work spanned design, implementation, rollout, analytics instrumentation, and post-launch reliability.

Key Features Delivered

Conditional Scoring (advanced AND logic). Led the end-to-end frontend architecture for a migration-critical capability. Designed the ComplexCriterion system and a dual-ID strategy to preserve data integrity while supporting complex criteria composition, editing, and rollout-safe backend integration.

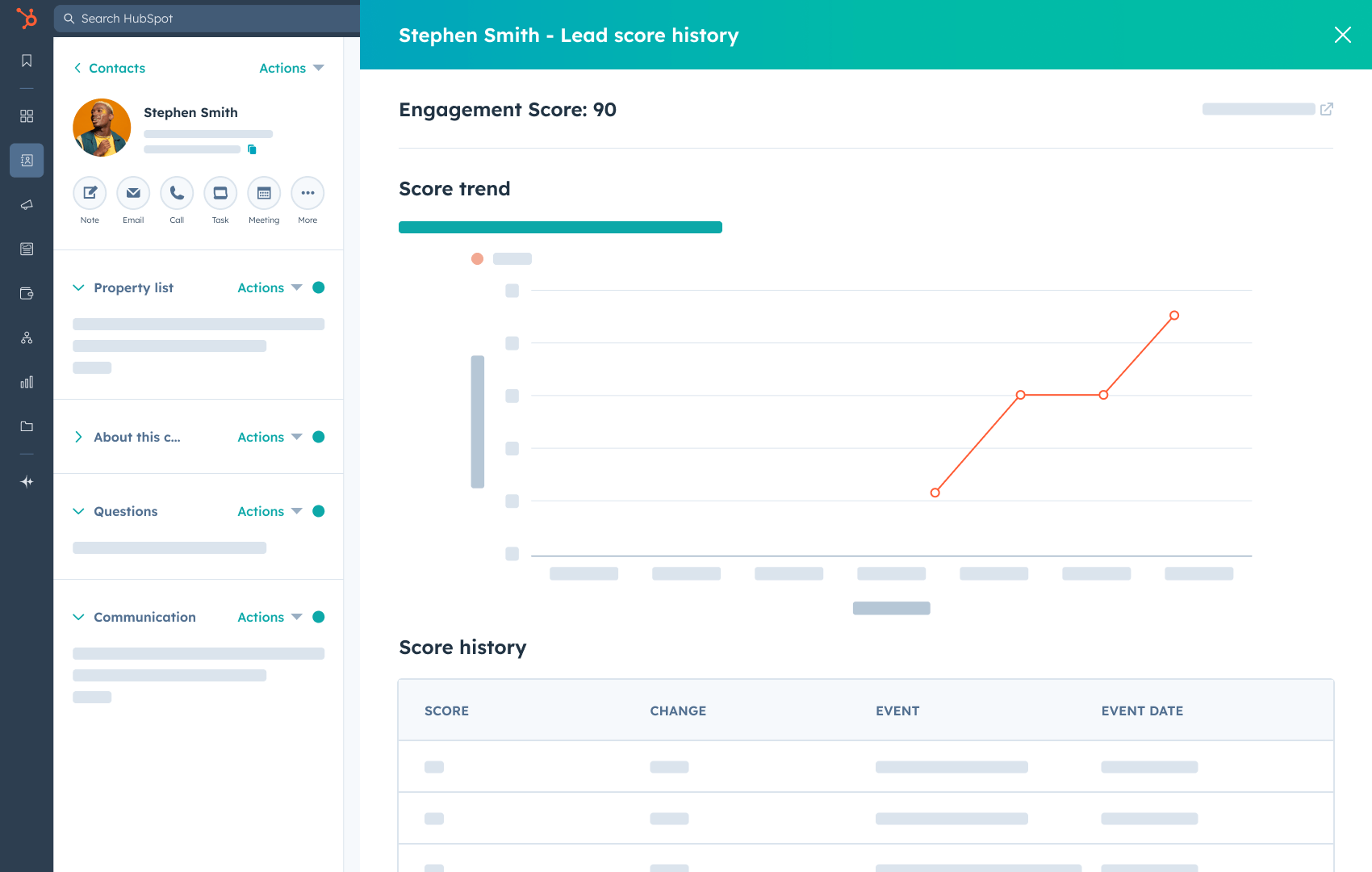
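
To make the AND semantics concrete, here is a minimal sketch of how a conditional criterion could be evaluated. Only the name `ComplexCriterion` comes from this write-up; its fields, the `Condition` shape, the `ContactRecord` type, and the `scoreCriterion` helper are assumptions made for illustration and do not reflect the production schema.

```typescript
// Hypothetical shapes for illustration only; not the production schema.
type ContactRecord = Record<string, string | number | boolean>;

interface Condition {
  property: string;
  equals: string | number | boolean;
}

interface ComplexCriterion {
  conditions: Condition[]; // every condition must hold (AND logic)
  points: number;          // points awarded when the whole group matches
}

function conditionMatches(contact: ContactRecord, condition: Condition): boolean {
  return contact[condition.property] === condition.equals;
}

// Award points only if the group is non-empty and all conditions match.
function scoreCriterion(contact: ContactRecord, criterion: ComplexCriterion): number {
  const allMatch =
    criterion.conditions.length > 0 &&
    criterion.conditions.every((c) => conditionMatches(contact, c));
  return allMatch ? criterion.points : 0;
}
```

In this sketch an empty condition list scores zero rather than matching everything, which is one conservative choice among several for a system where a wrong score can misroute a lead.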

Frequency v1 / v2 / v3. After early versions caused validation friction, I used analytics and user research to help drive a pivot from strict validation to a smarter suggestion model, improving flexibility while reducing configuration pain.
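
The difference between strict validation and a suggestion model can be sketched as follows. Everything here is hypothetical: the `FrequencyRule` shape, the 90-day threshold, and the function names are assumptions chosen to show the pattern, not the rules the product actually enforces.

```typescript
// Hypothetical rule: count an event at most `maxCount` times per `windowDays`.
interface FrequencyRule {
  maxCount: number;
  windowDays: number;
}

type RuleFeedback =
  | { kind: "ok" }
  | { kind: "error"; message: string } // blocks saving
  | { kind: "suggestion"; message: string; suggested: FrequencyRule }; // advisory only

const RECOMMENDED_MAX_WINDOW_DAYS = 90; // assumed threshold for illustration

// Strict model: anything outside the recommended range is rejected.
function strictValidate(rule: FrequencyRule): RuleFeedback {
  if (rule.maxCount < 1 || rule.windowDays < 1) {
    return { kind: "error", message: "Count and window must be at least 1." };
  }
  if (rule.windowDays > RECOMMENDED_MAX_WINDOW_DAYS) {
    return { kind: "error", message: "Window exceeds the recommended maximum." };
  }
  return { kind: "ok" };
}

// Suggestion model: truly invalid input still errors, but unusual input
// produces a non-blocking hint with a proposed alternative.
function suggest(rule: FrequencyRule): RuleFeedback {
  if (rule.maxCount < 1 || rule.windowDays < 1) {
    return { kind: "error", message: "Count and window must be at least 1." };
  }
  if (rule.windowDays > RECOMMENDED_MAX_WINDOW_DAYS) {
    return {
      kind: "suggestion",
      message: "Long windows may score stale activity.",
      suggested: { ...rule, windowDays: RECOMMENDED_MAX_WINDOW_DAYS },
    };
  }
  return { kind: "ok" };
}

function canSave(feedback: RuleFeedback): boolean {
  return feedback.kind !== "error";
}
```

Under these assumptions, a 120-day window is blocked by `strictValidate` but remains saveable under `suggest`, which leaves the final decision with the user.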

Group-wide Color Token Standardization. Initiated and drove a standardization effort across scoring repositories and API consumers after log analysis surfaced data quality and support overhead issues, improving consistency across internal systems and customer-facing contracts.

Trellis UI Migration. Migrated 100+ components in coordination with the design system team, preserving behavior while improving long-term maintainability and consistency.

Reliability, Testing, and Performance. Overhauled acceptance test stability and ownership, drove flaky test recovery, and diagnosed frontend memory and performance issues. Reduced network calls by ~40% and object creation by ~30% for key components.

Key Decisions & Tradeoffs

I consistently prioritized correctness, debuggability, and shared guardrails over surface-specific shortcuts.

For conditional scoring, I proposed a dual-ID strategy to separate UI lifecycle concerns from persisted criteria identity. This added upfront schema and migration complexity but materially improved reliability and became the standard pattern for newer criteria components.
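
One way to picture the dual-ID idea is the sketch below. It assumes a client-generated ID that stays stable for the editing session and a separate persisted ID that is only set once the backend has stored the criterion. The type and function names (`CriterionDraft`, `createDraft`, `markSaved`, `toPayload`) are hypothetical and not taken from the actual codebase.

```typescript
// Hypothetical shapes for illustration; not the production types.
interface CriterionDraft {
  clientId: string;           // UI-only identity: list keys, focus, undo
  persistedId: string | null; // backend identity: null until first save
  property: string;
  points: number;
}

interface CriterionPayload {
  id?: string;
  property: string;
  points: number;
}

let nextClientId = 0;
function newClientId(): string {
  nextClientId += 1;
  return `client-${nextClientId}`;
}

// A new, unsaved criterion has a client ID but no persisted ID yet.
function createDraft(property: string, points: number): CriterionDraft {
  return { clientId: newClientId(), persistedId: null, property, points };
}

// Saving attaches the persisted ID without disturbing the UI identity,
// so the row being edited does not remount or lose state.
function markSaved(draft: CriterionDraft, persistedId: string): CriterionDraft {
  return { ...draft, persistedId };
}

// The client ID never leaves the browser; only the persisted ID is sent.
function toPayload(drafts: CriterionDraft[]): CriterionPayload[] {
  return drafts.map(({ persistedId, property, points }) =>
    persistedId === null ? { property, points } : { id: persistedId, property, points }
  );
}
```

Keeping the two identities apart means UI concerns such as reordering or discarding an unsaved row cannot accidentally change which stored criterion a save request targets.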

I treated analytics instrumentation as part of the architecture rather than a post-launch task. This increased upfront work but enabled faster diagnosis of UX friction and supported a safe pivot to the Smart Suggestion model.

I also made proactive scope cuts and sequencing adjustments when cross-team dependencies threatened schedules, preserving the migration-critical path without compromising correctness.

AI-Driven Development & Team Enablement

Pushed AI-assisted development aggressively within the team, driving real production usage of Cursor and Claude for implementation, debugging, and refactoring.

Built custom AI workflows to accelerate development, stabilize tests, and reduce documentation overhead. Used rapid AI iteration to prototype and validate error-handling and UX patterns during feature redesigns.

Led internal talks on AI-driven testing, performance debugging, and development workflows, helping teams apply these techniques to legacy systems where little institutional knowledge remained.

This work materially improved development velocity and expanded how the team approached complex problems.

Outcome / Impact

Launched Conditional Scoring on schedule as a migration-critical capability, with 230 customer enrollments in the first 24 hours of beta, helping unblock a key platform transition and reduce migration risk.

Delivered multiple high-impact features in the Marketing Lead Scoring app while establishing a shared frontend foundation used across HubSpot scoring surfaces.

Improved reliability, test confidence, and performance for critical workflows, enabled safer iteration on complex scoring logic, and reduced support and operational overhead through standardization.

The result was not just feature delivery, but a scoring system that can continue to evolve safely at platform scale.